Most times our source Tables differs from our Destination Table, but in other times it doesn’t.In a scenario where our source table is the same as our destination, then we might come into a situation where our source table could be changed in terms of data type or length or even a new column added.

Make data easy with Helical Insight.

Helical Insight is the world’s best open source business intelligence tool.

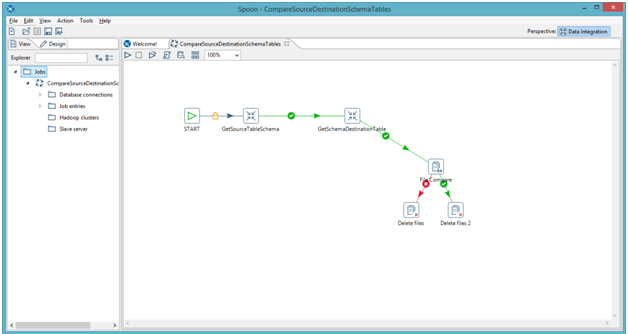

So hence this blogs shows how we can make life easy by having a simple Job that runs in a loop on all the similar tables in both source and destination, to check if there is a change in the schema

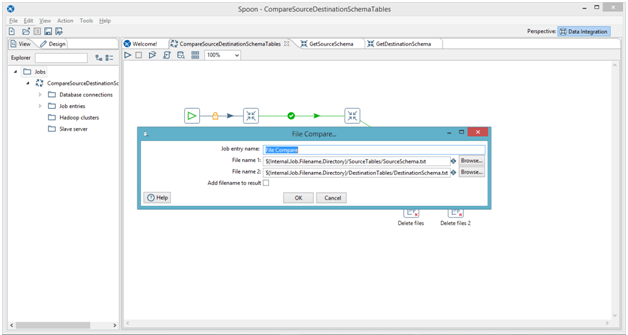

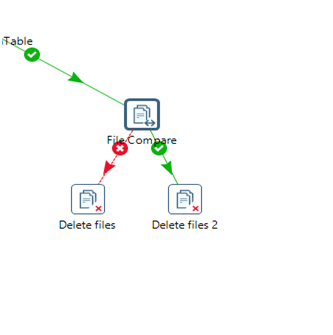

Here we are using simple Job to perform the comparison of the Source Table with the destination table.

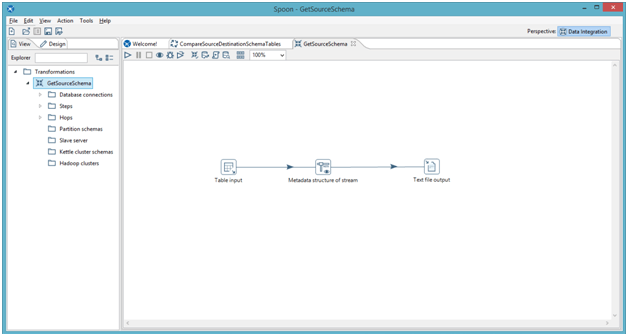

In the GetSourceSchema:

This uses a table input to run a Query Limit 1 i.e

SELECT * from person_employment,

Note: Here person_employment can be parameterized such that we can run the tables comparison in Loop and the name person_employment can be used as the Schema File Name (as we go further you will get a clear picture).

And then we use a Metadata structure of stream which takes the metadata of the Table and prints it in a text file which we will need for comparison

Make data easy with Helical Insight.

Helical Insight is the world’s best open source business intelligence tool.

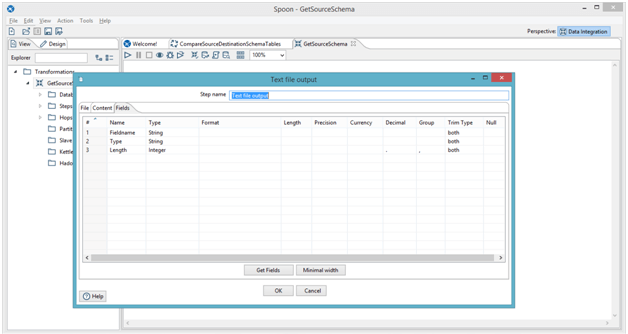

So from the Metadata Stream, we get a lot of Fields but the one I needed to compare is just the field name because I assume the source and destination field name are the same, other fields are Type and Length.

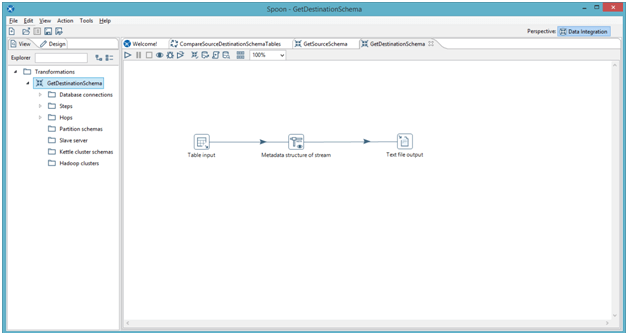

The same goes for GetDestinationSchema

Parametrizing the tables is a good idea so we can save the Text files with a unique name each time. And then pass/use the parameterized text name to compare.

If they match you can change the direction on what you want to do next if it fails you can send a mail to saying hey this table doesn’t match.

So a lot we can do, to loop you can reference: Loops in Pentaho Data Integration or Loops Pentaho Data Integration 2.0. This whole Pentaho Job is just a simple raw Job, which can be tailored to how you want it.

Make data easy with Helical Insight.

Helical Insight is the world’s best open source business intelligence tool.

Best Open Source Business Intelligence Software Helical Insight is Here

A Business Intelligence Framework

In case if you have any queries please get us at support@helicaltech.com

Thank You

Sohail Izebhijie

Helical IT Solutions Pvt Ltd

Hi. Thanks for sharing the valuable information. I found this article very helpful. Would love to read more from you. Keep up the good work.

I have read a similar article on Pentaho vs. Talend: How the Two Data Integration Tools Compare?. Please do check it out.