Issues while implementing loops in Pentaho Data Integration

To implement batch processing, we generally use the looping concept that Pentaho provides in its ETL jobs. Loops in PDI are supported only in jobs (kjb), not in transformations (ktr).


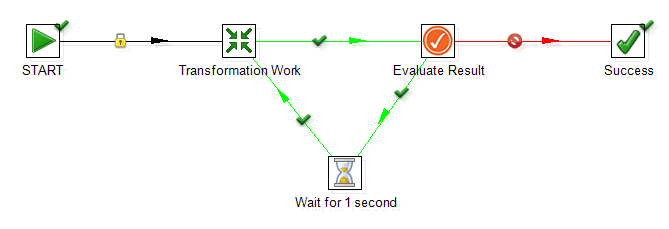

While implementing loops in PDI, we came across many blogs that suggest using a “Wait for” step and joining its output hop back to a previous step, as illustrated in the screenshot.

However, the limitation of this kind of looping is that it causes recursive stack allocation by the JVM during job execution, so the system may run out of memory after a high number of iterations (depending on the available memory), and the program crashes. It is therefore not advisable to run a high number of iterations when implementing loops in PDI.
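As a rough analogy only (this is plain Python, not PDI or JVM code, and every function name here is hypothetical), the sketch below contrasts a loop in which each iteration calls the next one, so stack frames accumulate, with a flat loop that reuses a single frame. It is meant to illustrate why a hop that loops back can exhaust memory after many iterations, not to reproduce how PDI executes a job.

```python
import sys


def run_recursive(iteration, total):
    """Each iteration invokes the next one, so one stack frame is added per pass."""
    if iteration >= total:
        return iteration
    return run_recursive(iteration + 1, total)


def run_iterative(total):
    """A flat loop reuses the same frame, so depth does not grow with iterations."""
    iteration = 0
    while iteration < total:
        iteration += 1
    return iteration


def survives_recursion(total):
    """Return True if the recursive variant completes, False if the stack limit is hit."""
    try:
        run_recursive(0, total)
        return True
    except RecursionError:
        return False


if __name__ == "__main__":
    too_deep = sys.getrecursionlimit() + 100
    print("iterative:", run_iterative(too_deep))
    print("recursive survives:", survives_recursion(too_deep))
```

The recursive variant fails once the iteration count exceeds the interpreter's stack limit, while the iterative variant is unaffected by the count.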

Possible Solutions:

1. First, minimize the number of iterations. The looping works properly up to 500 iterations, so try to keep the count below that.

2. Never use loops for scheduling. A loop used for scheduling becomes an infinite loop, which eventually crashes the whole program.

3. Increase your batch size so that fewer iterations are needed. Take this into consideration when implementing external batch processing.

4. For incrementing the value, it is advisable to use a separate transformation instead of a JavaScript step, because JavaScript consumes more memory than a separate transformation. Create a new transformation, use the Formula step to increment the values, and then set those variables.

5. Suggested approach for infinite looping: one possible way is to use the settings of the ‘Start’ step. Set the ‘Repeat’ flag and configure the interval. This causes the job to be re-initialized completely as a new instance, so it does not cause any memory issue.
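The trade-off between batch size and iteration count in points 1 and 3 comes down to simple arithmetic. The following is a minimal sketch in Python, assuming a known total row count; the function names and the example row count are hypothetical, and the limit of 500 is simply the figure quoted above, not a PDI setting.

```python
MAX_SAFE_ITERATIONS = 500  # figure quoted in this post; an assumption, not a PDI constant


def iterations_needed(total_rows, batch_size):
    """Number of loop passes required to process total_rows in chunks of batch_size."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return -(-total_rows // batch_size)  # ceiling division using integers only


def min_batch_size(total_rows, max_iterations=MAX_SAFE_ITERATIONS):
    """Smallest batch size that keeps the loop within max_iterations passes."""
    if max_iterations <= 0:
        raise ValueError("max_iterations must be positive")
    return max(1, -(-total_rows // max_iterations))


if __name__ == "__main__":
    rows = 1_000_000  # hypothetical table size
    print(iterations_needed(rows, 1000))  # passes needed with a batch size of 1000
    print(min_batch_size(rows))           # batch size that keeps the loop within the limit
```

For one million rows, a batch size of 1000 would need 1000 iterations, whereas a batch size of 2000 brings the loop down to 500.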

Thanks,

Nitish Kumar Mishra
